NOTE: too long for a blog (sorry), but I did want this to be available.

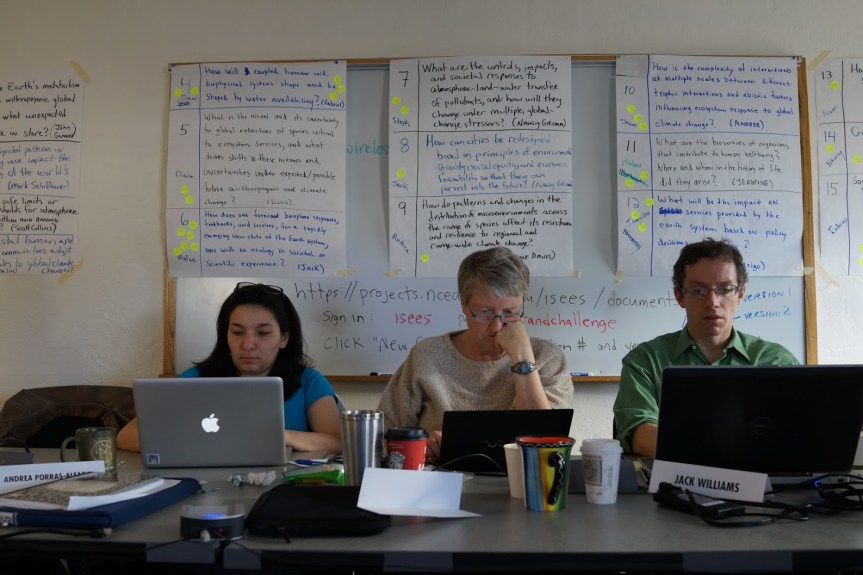

The West Big Data Innovation Hub held its first all-hands meeting in Berkeley last Thursday. What follows is a short talk I gave to the newly formed Governance Working Group.

The Hub seeks to become a community-led, volunteer-run organization that can bring together the academy and industry… and that other academy (the one with the statues), and regional and metro government organizations into a forum where new knowledge will be born to build the practices and the technologies for big data use in the western US.

To become this organization it will need to spin up governance. An initial task for the governance working group is to draft a preliminary governance document that outlines the shape of the Hub’s decision space, and the desired processes to enable those HUB activities needed to realize the mission of the organization.

Virtual organization governance is hard, and how to succeed at it is not well understood. We do know that the opportunities for failure are numerous. Funders will need to exercise patience and forbearance during the spin-up process.

I don’t know of any NSF-funded community-led, volunteer-run organization that can be a model for this governance. I would be very happy to hear about one. It would be great if this Hub becomes that successful organization.

I have three suggestions (with the usual caveats) to help frame the work of this working group.

NUMBER ONE: Your community does not yet exist.

There is a quote attributed to either Abraham Lincoln or Darrell Royal (depending on whether you’re from Texas or not)… “If you have five minutes to cut down a tree, spend the first three sharpening your axe.”

Community-building activities are the Hub sharpening its axe.

Right now, when someone talks about the “big data community” that’s just another word for a bunch of people whose jobs or research involve big data. That’s a cohort, not a community. If you want community—and you do want community—you have to build it first. That’s why you need to spend resources getting more people into the process and give them every reason to stay involved.

The first real job of the hub is to build your member community.

Part of building your community is to give your members a stage for their vision of the future. Challenge your members to envision the destination that marks the optimal big-data future for a wide range of stakeholders, then build a model for this destination inside the Hub.

To meld vision with action and purpose and forge something that is new and useful, that’s a great goal: think of the Hub as the Trader Joe’s of big data. The place people know to go to… in order to get what they need.

NOTE: Why do you actually need community? There’s a whole other talk there…. Community is the platform for supporting trustful teamwork… without it, you will not get things done. Without it emails will not get answered, telecons will not be attended, ideas and problems will not surface in conversations… and meetings will be tedious.

NUMBER TWO: Engagement is central.

ANOTHER QUOTE: Terry Pratchett, the philosopher poet, once wrote: “Give a man a fire and he’s warm for a day. Ah, but set a man on fire and he’s warm for the rest of his life…”

Your governance effort should be centered on maximizing member engagement by giving the greatest number of members opportunities to do what they believe is most important for them to do RIGHT NOW. Invite new members to join and then ask them what the Hub can do for them. This is not a Kennedy moment.

Your members want pizza… it’s your job to build them a kitchen and let them cook.

Your steering committee (or whatever this is called) needs to be 90% listening post and 10% command center. It needs to listen and respond to members who want to use the Hub to do what they think the hub should do. It needs to coordinate activities and look for gaps. It needs to remind members of the vision, the values, and the mission goals of the organization, and then remind them that this vision, these values, and the mission belong to them and are open to all members to reconfigure and improve.

The Hub needs to be a learning organization with multiple coordinated communication channels… Members need to know their ideas have currency in the organization.

Do not be afraid of your members, but do be wary of members who seem to want to lead without first attracting any followers. Spread leadership around. Look for leadership on the edges and grow it.

Engagement will lead to expertise. Over time, the members will learn to become better members. The organization should improve over time. It will not start out amazing. It can become amazing if you let it.

Each member needs to get more than they give to the organization. If they don’t, then you’re probably doing it wrong. This will be difficult at first, so the shared vision will need to carry people through that initial phase.

Creating a bunch of committees and a list of tasks that need to be finished on a deadline is NOT the way to engage members. If you think that’s engagement, you are probably doing it wrong. YES, some things need to be done soon to get the ball rolling. But remember that volunteers have other, full time jobs.

NUMBER THREE: There can be a great ROI for the NSF.

The Hub’s success will provide the NSF with a return on its investment that is likely to be quite different from what it expects today, but also hugely significant and valuable.

Final quote here: Brandon Sanderson, the novelist, wrote: “Expectations are like fine pottery. The harder you hold them, the more likely they are to break.”

The hub is NOT an NSF-funded facility, or a facsimile of a facility…

Unlike a facility, the NSF will not need to fund a large building somewhere and maintain state-of-the-art equipment. The NSF already funds these facilities for its big data effort. The Hub is not funded to be a facility and will not act like a facility.

The hub is also not just another funded project…

Unlike a fully funded project, the NSF will not be paying every member to accomplish work in a managed effort with timelines and deliverables.

Volunteers are not employees. They cannot and should not be tasked to do employee-style work. They have other jobs. The backbone coordination projects for the hubs and spokes are paid to enable their volunteer members to do the work of volunteers. The Hub is not a giant funded project. It will not work like a giant funded project. It cannot be managed. It must be governed. This means it needs to govern itself.

Self governance is the biggest risk of failure for the hub. That’s why the work you do in this working group is crucial.

Self governance is also the only pathway to success. So, there is a possible downside and potentially a really big upside…

Remember that process is always more important than product. You may need to remind your NSF program managers of this from time to time.

The Hub needs to take full advantage of the opportunities and structural capacities it inherits as a community-led, volunteer-run organization. Its goal is to be the best darn community-led, volunteer-run organization it can be. Not a facility and not a big, clumsy funded project.

Here are Seven Things the NSF can get only by NOT funding them directly, but through supporting the HUB as a community-led virtual organization of big-data scientists/technologists:

1. The NSF gets to query and mine a durable, expandable level of collective intelligence and a requisite variety of knowledge within the HUB;

2. The NSF can depend on an increased level of adoption of standards and shared practices that emerge from the HUB;

3. The NSF will gain an ability to use the HUB’s community network to create new teams capable of tackling important big-data issues (also it can expect better proposals led by hub member teams);

4. The NSF can use the HUB’s community to evaluate high-level decisions before these are implemented (=higher quality feedback than simple RFIs);

5. Social media becomes even more social inside the HUB big-data community, with lateral linkages across the entire internet. This can amplify the NSF’s social media impact;

6. The Hub’s diverse stakeholders will be able to self-manage a broad array of goals and strategies tuned to a central vision and mission and with minimal NSF funding; and,

7. The NSF and the Hub will be able to identify emergent leadership for additional efforts.

Bottom Line: Sponsoring a community-led, volunteer-run big data Hub offers a great ROI for the NSF. There are whole arenas of valuable work to be done, but only if nobody funds this work directly and the NSF instead funds the backbone organization that supports a community of volunteers. This is the promise of a community-led organization.

And it all starts with self-governance…

To operationalize your community-building effort you will be spinning up the first iteration of governance. If you can keep this first effort nimble, direct, as open to membership participation as you can, and easy to modify, all will be good. Do not sweat the details at this point. Right now you are building just the backbone for the organization. Just enough to enable and legitimate the first round of decisions.

Make sure that this document is not set in concrete… it will need to change several times in the next 3-5 years. In the beginning, create a simple process and a low threshold for changes (not a supermajority). TIP: Keep all the governance documents on GitHub or something like that. Stay away from Google Docs! Shun Word and PDFs!

Postscript:

Hallmark moments in the future of this Hub if it is successful:

At some point 90% of the work being done through the Hub will be by people not in this room today. The point is to grow and get more diverse. With proper engagement new people will be finding productive activities in the hub. [with growth and new leadership from the community]

At some point none of the people on the steering committee will be funded by the NSF for this project… [this is a community-led org… yes?]…

At a future all-hands meeting more than 50% of the attendees will be attending for the first time.